Tag: TensorFlow

-

Fruit identification using Arduino and TensorFlow

Reading Time: 7 minutes. By Dominic Pajak and Sandeep Mistry. Arduino is on a mission to make machine learning easy enough for anyone to use. The other week we announced the availability of TensorFlow Lite Micro in the Arduino Library Manager. With this, some cool ready-made ML examples such as speech recognition, simple machine vision and…

-

Get started with machine learning on Arduino

Reading Time: 12 minutes. This post was originally published by Sandeep Mistry and Dominic Pajak on the TensorFlow blog. Arduino is on a mission to make machine learning simple enough for anyone to use. We’ve been working with the TensorFlow Lite team over the past few months and are excited to show you what we’ve been…

-

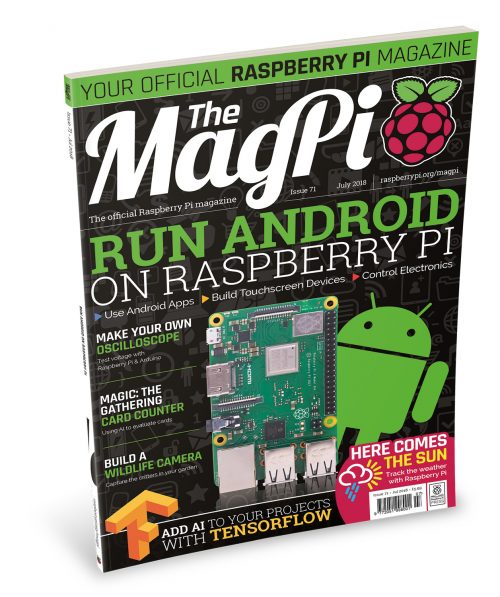

MagPi 71: Run Android on Raspberry Pi

Reading Time: 3 minutes. Hey folks, Rob here with good news about the latest edition of The MagPi! Issue 71, out right now, is all about running Android on Raspberry Pi with the help of emteria.OS and Android Things. Android and Raspberry Pi, two great tastes that go great together! Android and Raspberry Pi. A big…

-

The deep learning Santa/Not Santa detector

Reading Time: 3 minutes. Did you see Mommy kissing Santa Claus? Or was it simply an imposter? The Not Santa detector is here to help solve the mystery once and for all. Building a “Not Santa” detector on the Raspberry Pi using deep learning, Keras, and Python. The video is a demo of my “Not Santa” detector…