Students from MIT have created a prototype 3D printed device, AlterEgo, that can recognize the words you say silently to yourself and interpret them as commands.

Step aside, Alexa, you’ve got some company. A computer interface devised by researchers at MIT can transcribe words that the user verbalizes internally but doesn’t actually speak aloud.

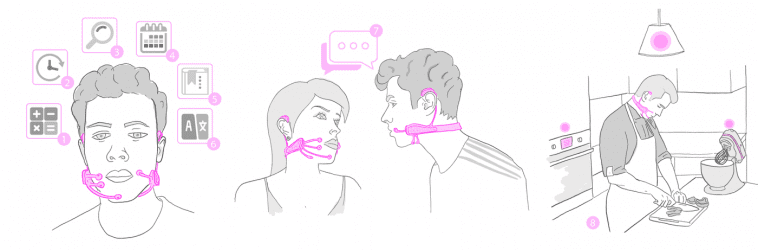

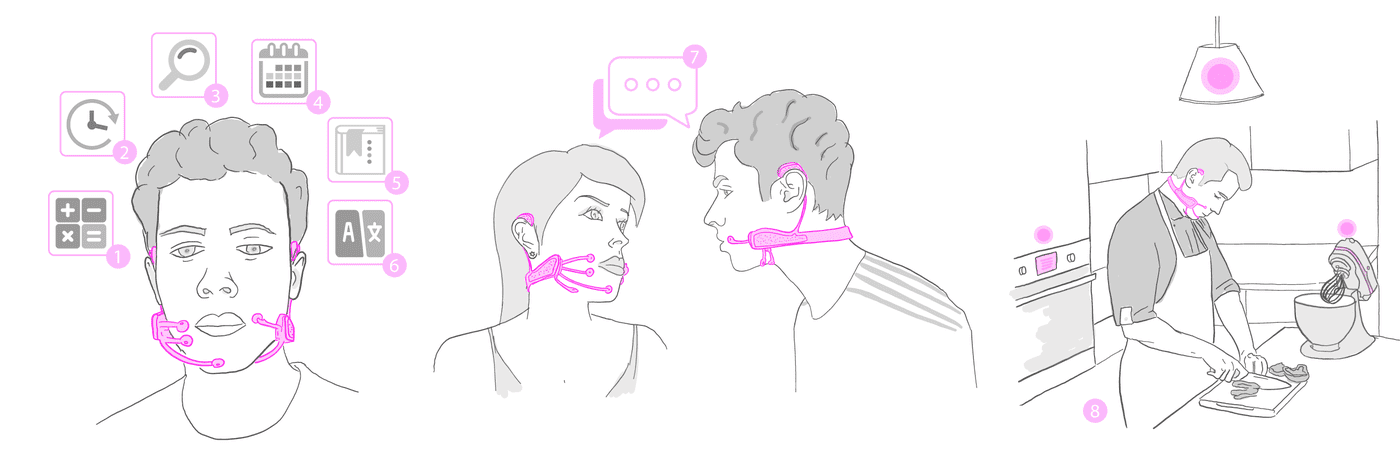

Dubbed AlterEgo, the prototype consists of a 3D printed wearable device and an associated computing system. Electrodes in the device pick up neuromuscular signals in the jaw and face that are triggered by internal verbalizations — saying words “in your head” — but are undetectable to the human eye.

The signals are then fed to a machine-learning system that has been trained to correlate particular signals with particular words.
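
To make the idea of correlating signals with words concrete, here is a minimal Python sketch. It is not the researchers' model: the article does not describe their architecture, so this swaps in a simple nearest-centroid classifier. The function names, the two-number feature vectors, and the example words are all assumptions made for illustration.

```python
from collections import defaultdict
from math import dist


def train_centroids(samples):
    """Average the feature vectors recorded for each word.

    `samples` is a list of (feature_vector, word) pairs. The feature
    vectors stand in for processed electrode readings and are hypothetical.
    """
    grouped = defaultdict(list)
    for features, word in samples:
        grouped[word].append(features)
    return {
        word: tuple(sum(column) / len(vectors) for column in zip(*vectors))
        for word, vectors in grouped.items()
    }


def classify(centroids, features):
    """Return the word whose centroid is closest to `features`."""
    return min(centroids, key=lambda word: dist(centroids[word], features))


# Toy training data: two made-up signal features per sample.
training = [
    ((0.9, 0.1), "add"),
    ((1.1, 0.2), "add"),
    ((0.1, 0.8), "multiply"),
    ((0.2, 1.0), "multiply"),
]
centroids = train_centroids(training)
print(classify(centroids, (1.0, 0.0)))  # prints "add"
```

A real system would work with far richer signal features and a much larger vocabulary, but the basic loop is the same: record labeled examples, fit a model, then map new signals to the most likely word.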

The device also includes a pair of bone-conduction headphones, which transmit vibrations through the bones of the face to the inner ear. Because they don’t obstruct the ear canal, the headphones enable the system to convey information to the user without interrupting conversation or otherwise interfering with the user’s hearing.

AlterEgo is effectively a complete silent-computing system that lets the user undetectably pose difficult computational problems and receive answers to them.

In one of the researchers’ experiments, for example, subjects used the system to silently report an opponent’s moves in a chess game — and just as quietly receive computer-recommended responses. That’s cheating!

AlterEgo Wearable Builds on Subtle Signals

“The motivation for this was to build an IA device — an intelligence-augmentation device,” says Arnav Kapur, a graduate student at the MIT Media Lab, who led the development of the new system.

“Our idea was: Could we have a computing platform that’s more internal, that melds human and machine in some ways and that feels like an internal extension of our own cognition?”

Using the prototype wearable, the team conducted a usability study in which 10 subjects spent about 15 minutes each customizing an arithmetic application to their own neurophysiology, then spent another 90 minutes using it to execute computations. In that study, the system had an average transcription accuracy of about 92 percent.
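
For readers curious how an average transcription accuracy might be computed, the Python sketch below shows one plausible approach. The article does not say how the researchers scored or averaged their results, so both the per-word scoring and the per-subject averaging here are assumptions, and the sessions are made-up data rather than study results.

```python
def transcription_accuracy(predicted, expected):
    """Fraction of positions where the predicted word matches the expected one."""
    if len(predicted) != len(expected):
        raise ValueError("sequences must have the same length")
    if not expected:
        raise ValueError("sequences must not be empty")
    correct = sum(p == e for p, e in zip(predicted, expected))
    return correct / len(expected)


def average_accuracy(sessions):
    """Mean of per-subject accuracies; `sessions` holds (predicted, expected) pairs."""
    scores = [transcription_accuracy(p, e) for p, e in sessions]
    return sum(scores) / len(scores)


# Made-up sessions for two hypothetical subjects.
sessions = [
    (["one", "plus", "two", "equals"], ["one", "plus", "two", "equals"]),
    (["three", "times", "five", "equals"], ["three", "times", "nine", "equals"]),
]
print(average_accuracy(sessions))  # prints 0.875
```

Here the first subject scores 4 out of 4 and the second 3 out of 4, so the average is (1.0 + 0.75) / 2 = 0.875.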

But, Kapur says, the system’s performance should improve with more training data, which could be collected during its ordinary use. He estimates that the better-trained system he uses for demonstrations has an accuracy rate higher than that reported in the usability study.

In ongoing work, the researchers are collecting a wealth of data on more elaborate conversations. The goal is to build applications with much more expansive vocabularies.

“We’re in the middle of collecting data, and the results look nice,” Kapur says. “I think we’ll achieve full conversation some day.”

Source: MIT News
